Deploying Prompts

Access Controls

Sharing level

Each prompt has a sharing level that controls who can see and use it. Set it from the Sharing tab on the prompt.

| Level | What it means |
|---|---|
| Private | Only you can see and use this prompt |
| Chat only | Anyone in the organization can run it from the chat launcher, but it does not appear in the Prompts library |
| Library View | Anyone can find it in the library and use it in chat, but cannot edit it |
| Library Edit | Anyone can find it, use it in chat, and edit it |

Start with Private while building and testing. Move to Chat only to make it available in chat without exposing the configuration. Use Library View for stable, shared prompts your team shouldn't modify. Use Library Edit for prompts you want the team to collaboratively maintain.

You can also share with specific individuals rather than the whole organization - add them by name or email from the Sharing tab.
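As a rough mental model of the table above, each level can be read as a set of permissions granted to other people in the organization. The sketch below is illustrative only: the `SharingLevel` enum, the action names, and the `can` helper are hypothetical and do not correspond to any product API.

```python
from enum import Enum


class SharingLevel(Enum):
    PRIVATE = "Private"
    CHAT_ONLY = "Chat only"
    LIBRARY_VIEW = "Library View"
    LIBRARY_EDIT = "Library Edit"


# What people other than the owner can do at each level.
# The action names are hypothetical labels for the table's wording.
CAPABILITIES = {
    SharingLevel.PRIVATE: set(),
    SharingLevel.CHAT_ONLY: {"run_in_chat"},
    SharingLevel.LIBRARY_VIEW: {"run_in_chat", "find_in_library"},
    SharingLevel.LIBRARY_EDIT: {"run_in_chat", "find_in_library", "edit"},
}


def can(level, action):
    """Return True if non-owners may perform `action` at `level`."""
    return action in CAPABILITIES[level]


for level in SharingLevel:
    print(level.value, sorted(CAPABILITIES[level]))
```

Read this way, each step from Private to Library Edit adds exactly one permission, which is why the recommended rollout moves through the levels in that order.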

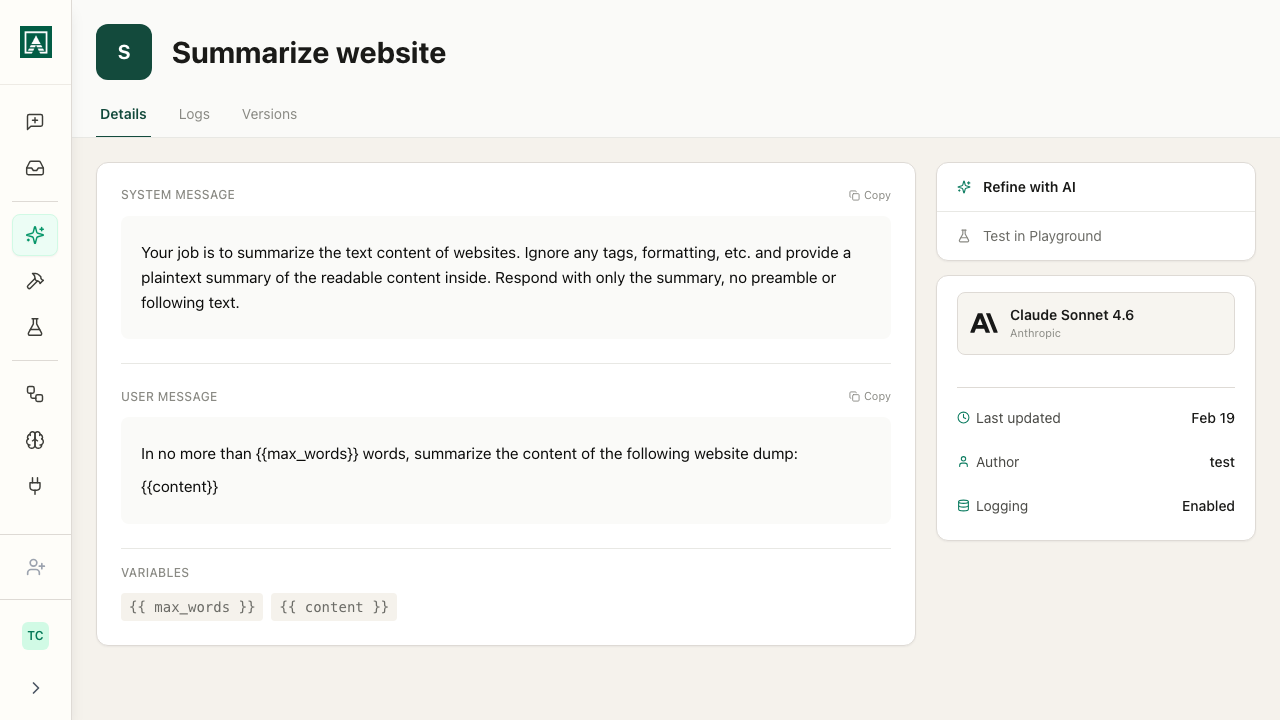

API access

Turning on API access lets external systems call the prompt over HTTP. When it is on, the prompt detail page shows the endpoint URL and the request format, with your prompt's variables pre-filled.

Pass variable values as JSON in the POST body. Include your access key in the Authorization header if required.

The same prompt serves both chat users and API callers. Changes to the prompt take effect in both contexts immediately, unless an API deployment is pinned to a specific version (see Versioning).
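A minimal sketch of such a call in Python, using only the standard library. The endpoint URL, the variable names (`customer_name`, `ticket_text`), and the `Bearer` scheme in the Authorization header are all assumptions made for illustration; copy the real URL, variables, and header format from the prompt detail page.

```python
import json
import urllib.request

# Hypothetical placeholders: replace with the values shown on the prompt detail page.
ENDPOINT_URL = "https://example.com/api/prompts/my-prompt/run"
ACCESS_KEY = "YOUR_ACCESS_KEY"


def build_request(variables, access_key=None):
    """Build a POST request whose JSON body carries the prompt variables."""
    headers = {"Content-Type": "application/json"}
    if access_key:
        # Assumption: the access key is sent as a Bearer token.
        headers["Authorization"] = f"Bearer {access_key}"
    body = json.dumps(variables).encode("utf-8")
    return urllib.request.Request(
        ENDPOINT_URL, data=body, headers=headers, method="POST"
    )


request = build_request(
    {"customer_name": "Ada", "ticket_text": "Cannot log in"},
    access_key=ACCESS_KEY,
)

# Sending is left commented out because the URL above is a placeholder.
# with urllib.request.urlopen(request, timeout=30) as response:
#     print(json.loads(response.read()))
```

Building the request separately from sending it makes it easy to inspect the body and headers before pointing the code at a live endpoint.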

Versioning

Every save creates a new revision. The active version is what chat users and API callers receive. You can pin a specific version to an API deployment so code integrations are not affected by ongoing prompt edits.

See the Versions tab on any prompt to review revision history, compare changes, and roll back.

Refining Prompts

The Refine with AI button on a saved prompt opens a chat with the prompt pre-loaded. Use it to iterate on instructions conversationally - describe what you want to change and the model suggests or applies revisions. Changes can be saved as a new version.

Logs

The Logs tab records every execution. See Prompt Logs for details on what is tracked and how to filter by source, status, and version.

Checklist before org-wide deployment

- Tested with realistic variable inputs

- Edge cases handled (short input, long input, missing fields)

- Name and description are clear to someone who did not build it

- Display message set if the full user message is long

- Data logging configured appropriately for the sensitivity of the data

- Sharing level set correctly for chat and library access

- At least two people have run it and confirmed the output is useful
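For the edge-case item in the checklist, one way to build a test set is to derive short, long, and missing-field variants from a single realistic input. The sketch below assumes all variable values are strings; the helper name `edge_case_inputs` and the sample variable names are hypothetical.

```python
def edge_case_inputs(baseline, long_repeat=500):
    """Return labeled variants of `baseline` with short, long, and missing values.

    Assumes every value in `baseline` is a string.
    """
    cases = {"baseline": dict(baseline)}
    for name, value in baseline.items():
        # Short input: keep only the first character.
        cases[f"short:{name}"] = {**baseline, name: value[:1]}
        # Long input: repeat the value many times.
        cases[f"long:{name}"] = {**baseline, name: value * long_repeat}
        # Missing field: drop the variable entirely.
        cases[f"missing:{name}"] = {
            k: v for k, v in baseline.items() if k != name
        }
    return cases


cases = edge_case_inputs({"customer_name": "Ada", "ticket_text": "Cannot log in"})
for label in cases:
    print(label)
```

Each variant can then be run through the prompt in chat or through the API, and the output reviewed by hand.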