We spent this morning doing the thing we always do when a big model lands: watching the reactions roll in while getting it ready for you on Aisle.

The headlines are impressive. Better reasoning, vision that's roughly 3x stronger (now handling up to 3.75 megapixels), sharper instruction following, and what Anthropic calls "improved design taste." For those of us doing deep research, long-form analysis, or complex synthesis work, those gains matter.

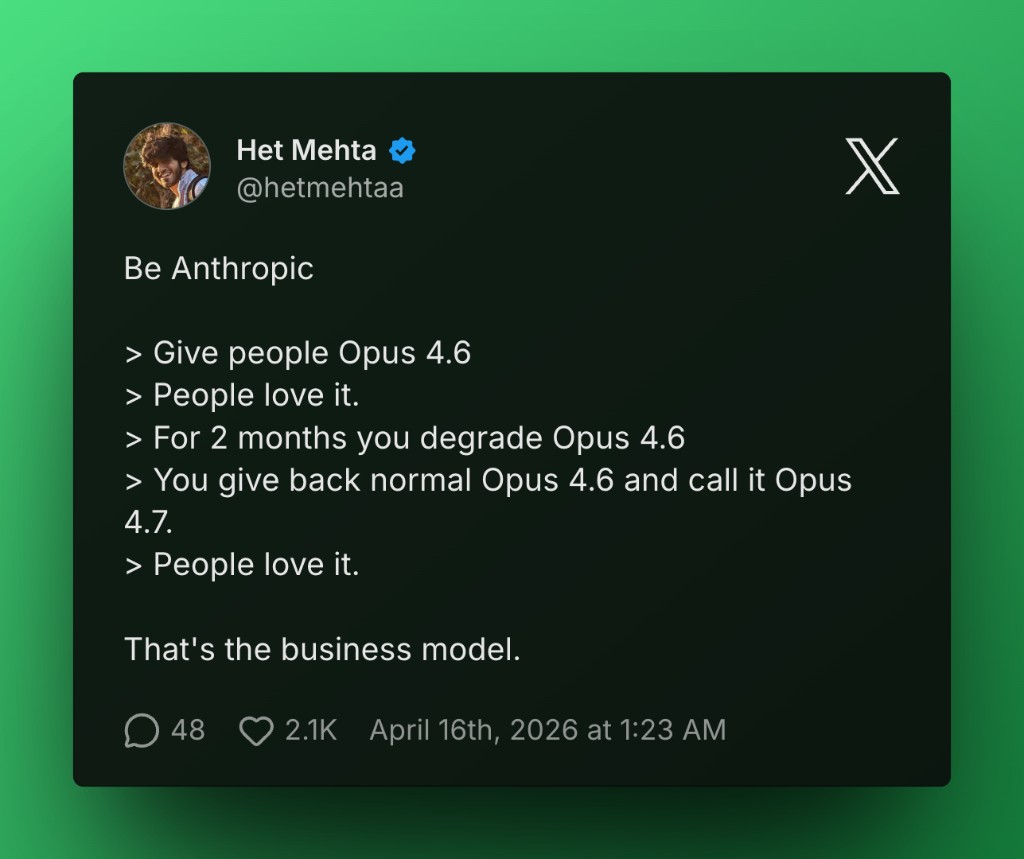

But here's where it gets interesting.

The community feedback has been… mixed. The new tokenizer is more efficient in some cases, but in others it uses 10-35% more tokens for the same input. Output might get better, but you may pay more for it. This is why we obsess over model comparison.
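To make that 10-35% range concrete, here is a back-of-envelope sketch. The token volume and the per-million-token price below are placeholders we made up for illustration, not actual pricing, and `cost_range` is a hypothetical helper, so plug in your own numbers.

```python
def cost_range(baseline_tokens, price_per_million, low=0.10, high=0.35):
    """Return (baseline, low-end, high-end) cost in dollars.

    low/high are the fractional increases in token count (10% and 35%).
    """
    base = baseline_tokens / 1_000_000 * price_per_million
    return base, base * (1 + low), base * (1 + high)


# Hypothetical workload: 2M input tokens a month at $5.00 per million tokens.
base, lo, hi = cost_range(baseline_tokens=2_000_000, price_per_million=5.00)
print(f"baseline ${base:.2f}, with the new tokenizer ${lo:.2f} to ${hi:.2f}")
```

With these made-up numbers, a $10.00 workload lands somewhere between $11.00 and $13.50. Whether that is worth it depends on how much better the output is for your work.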

On Aisle you don't have to commit to "latest" just because the internet is yelling about it. You can drop the same prompt into Opus 4.7, Opus 4.6, Sonnet 4.5, GPT-4o, Grok 4.20, or whichever other model you're partial to and see what actually performs for your work.

Your prompts travel with you. The same one you wrote last month works cleanly across every model we support. Prompts are assets and should be treated as such.

Model choice is one of the few remaining levers of control we have. The industry would prefer you treat "new" as self-evidently better. We prefer you treat it as data. Test it. Measure it against your real tasks. Decide for yourself.
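If you like to think about "test it, measure it" in code, the idea looks roughly like the sketch below. This is not Aisle's API: `compare_models`, the stub models, and the length-based score are all hypothetical stand-ins. In practice each callable would wrap a real model call, and the score would be whatever you actually care about for your task.

```python
from typing import Callable, Dict, List, Tuple


def compare_models(
    prompt: str,
    models: Dict[str, Callable[[str], str]],
    score: Callable[[str], float],
) -> List[Tuple[str, float, str]]:
    """Run one prompt through every model and rank the outputs by score."""
    results = []
    for name, run in models.items():
        output = run(prompt)
        results.append((name, score(output), output))
    # Highest score first.
    return sorted(results, key=lambda r: r[1], reverse=True)


# Stand-in "models" so the sketch runs without any network calls.
stub_models = {
    "model-a": lambda p: p.upper(),
    "model-b": lambda p: p + " " + p,
}


def length_score(output: str) -> float:
    """Toy metric (assumption): longer output scores higher."""
    return float(len(output))


ranking = compare_models("summarize this memo", stub_models, length_score)
for name, s, _ in ranking:
    print(name, s)
```

The point of the design is that the prompt stays fixed while the model varies, so any difference in score comes from the model and not from how you asked.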

So if you've been wondering whether Opus 4.7 is worth it for your research, writing, or reasoning workflows, our answer is practical: Try it next to the models you already trust. The results might surprise you. Or they might confirm what you already suspected. Either way, you'll know instead of guessing.